An unusual tool for unused code

The problem

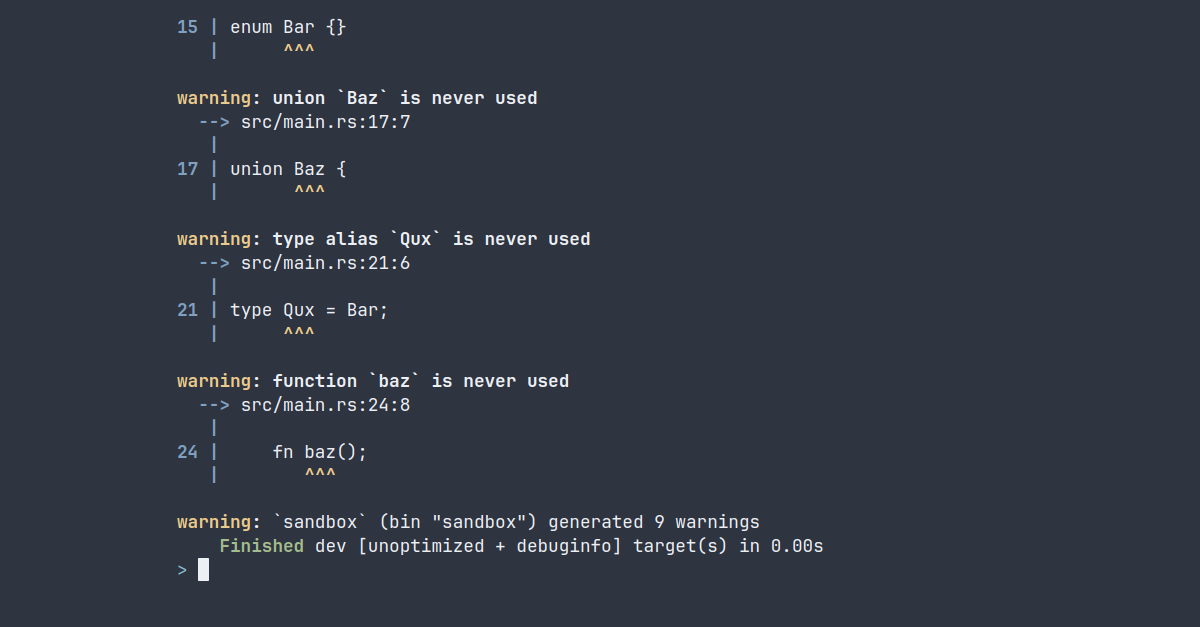

In binary crates in particular, it's not uncommon to get compiler warnings about unused code. For manually written code, the unused parts will usually serve a purpose later on. But for automatically generated functions, such as those created by the bindgen crate, these warnings will keep drowning out other compiler output until you manually remove the offending code, which takes just a bit too long for our tastes. Therefore, it was time to spend even more time trying to automate it.

Of course, #[allow(unused)] also exists, but this tool was created to minify bindgen functions for sudo-rs. For such a security-critical application, having a bunch of unused code in there is unnecessarily risky.

Introducing...

Over the course of a few days, we created cargo-minify, a command-line tool that removes unused code. It comes with a diverse list of awesome features:

- Removing unused constants

- Removing unused functions

- Removing unused associated functions

- Removing unused struct definitions

- Removing unused enums

- Removing unused unions

- Removing unused type aliases

- Removing unused macro definitions

- Removing empty mod blocks

- Removing empty impl blocks

- Removing empty extern blocks

- Git integration to make sure you don't accidentally delete your entire codebase without a way back
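Removing empty blocks can cascade: a mod that contains nothing but an empty mod becomes empty itself once the inner one is gone. The sketch below illustrates that bottom-up pruning on a tiny, hypothetical stand-in for a syntax tree. The Item enum and prune_empty are illustrative names, not cargo-minify's code, and whether nested empty blocks should disappear in one pass is an assumption of this sketch.

```rust
/// A tiny, hypothetical stand-in for a syntax tree; real code would work on
/// syn's item types or directly on the source text instead.
#[derive(Debug, PartialEq)]
enum Item {
    Fn(String),
    Mod(String, Vec<Item>),
    Impl(String, Vec<Item>),
    Extern(Vec<Item>),
}

/// Removes empty `mod`, `impl` and `extern` blocks. Children are pruned first,
/// so a block that only contains empty blocks is removed as well.
fn prune_empty(items: Vec<Item>) -> Vec<Item> {
    items
        .into_iter()
        .filter_map(|item| match item {
            Item::Mod(name, children) => {
                let children = prune_empty(children);
                if children.is_empty() { None } else { Some(Item::Mod(name, children)) }
            }
            Item::Impl(ty, children) => {
                let children = prune_empty(children);
                if children.is_empty() { None } else { Some(Item::Impl(ty, children)) }
            }
            Item::Extern(children) => {
                let children = prune_empty(children);
                if children.is_empty() { None } else { Some(Item::Extern(children)) }
            }
            // Every other item is kept as it is.
            other => Some(other),
        })
        .collect()
}
```

Given a mod outer containing only an empty mod inner, both disappear, while an impl that still holds a function survives.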

Demo

cargo-minify is extremely simple to use: just run it in the root of your project and it will remove all the unused code it can find.

To fine-tune which crates to minify and which kinds of minifications to apply, see cargo minify --help.

Project overview

Removing code wasn't our only goal; learning how to build such a tool was just as valuable. Making a cargo subcommand is as simple as prefixing the crate name with cargo-, but between reading compiler output and parsing code for unused or empty structures, there seemed to be more than enough interesting things to do.
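One detail of that convention: when you run cargo minify, Cargo starts the cargo-minify binary and passes the subcommand name itself as the first argument, so the tool sees minify before its real options. A minimal sketch of stripping it, using a hypothetical helper named subcommand_args, might look like this:

```rust
/// Returns the arguments meant for the tool itself: drops the program path
/// and, when invoked as `cargo minify`, the subcommand name Cargo inserts.
fn subcommand_args<I>(args: I, name: &str) -> Vec<String>
where
    I: IntoIterator<Item = String>,
{
    // Skip argv[0], the path of the executable.
    let mut args = args.into_iter().skip(1).peekable();
    // Running `cargo-minify` directly has no extra name; via Cargo it does.
    if args.peek().map(String::as_str) == Some(name) {
        args.next();
    }
    args.collect()
}
```

In a real main this would be called as subcommand_args(std::env::args(), "minify"), and the result handed to the argument parser, so both invocation styles behave the same.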

So let's quickly go over each of the major components of this project.

Parsing arguments

For parsing command-line arguments, we decided to use the gumdrop crate, because we wanted to try something other than clap. gumdrop is a lightweight command-line argument parser that uses a derive macro to define the program's input. It works much like clap's derive approach, but uses only proc-macro2 and syn as dependencies. Since this is a project that minimizes projects, minimizing the project itself seemed like a fun, arbitrary goal, which we completely ignored apart from this particular choice. gumdrop works like a charm, though!

Workspace resolution

Since this tool is quite similar to other Cargo subcommands, such as cargo fix and cargo fmt, we decided to take inspiration from (read: steal most of) their code. Most of them run the cargo metadata command behind the scenes. Given the Cargo.toml file at the root of the project, cargo metadata lists all crates present in the current workspace, whether those are binaries, libraries, tests or examples, along with a whole bunch of other data we don't really need. Combining this with a few flags in the command-line tool allows us to specify exactly which targets should be minified. By default, only the root package is targeted.
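The selection step can be sketched on a simplified, hypothetical model of the cargo metadata output: the real JSON has many more fields, and each target's kind is a list rather than a single string. The Selection modes below are illustrative and are not cargo-minify's actual flags; only the default of targeting the root package comes from the description above.

```rust
/// Simplified, hypothetical view of one target from `cargo metadata`.
#[derive(Debug)]
struct Target {
    name: String,
    kind: String, // e.g. "bin", "lib", "test" or "example"
}

/// Simplified, hypothetical view of one package from `cargo metadata`.
#[derive(Debug)]
struct Package {
    name: String,
    targets: Vec<Target>,
}

/// Hypothetical selection modes (not cargo-minify's real command-line flags).
enum Selection {
    RootOnly,
    Packages(Vec<String>),
    Workspace,
}

/// Returns (package, target) name pairs to minify. An empty `kinds` slice
/// means every kind of target is included.
fn select_targets<'a>(
    packages: &'a [Package],
    root: &str,
    selection: &Selection,
    kinds: &[&str],
) -> Vec<(&'a str, &'a str)> {
    packages
        .iter()
        .filter(|p| match selection {
            Selection::RootOnly => p.name == root,
            Selection::Packages(names) => names.contains(&p.name),
            Selection::Workspace => true,
        })
        .flat_map(move |p| {
            p.targets
                .iter()
                .filter(move |t| kinds.is_empty() || kinds.iter().any(|k| *k == t.kind))
                .map(move |t| (p.name.as_str(), t.name.as_str()))
        })
        .collect()
}
```

With RootOnly and no kind filter, every target of the root package is returned, which matches the default behaviour described above.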

Reading compiler output

Now that we know which packages to minify, it's time to determine which parts of the code are actually unused. At a glance, that appears to be quite simple, because the compiler already warns us about instances of unused code. However, there are things that can be considered unused but are not flagged as such by the compiler, and there are things that the compiler does complain about but likely shouldn't be removed.

For instance, the compiler doesn't warn about unused public items under any circumstance. This is important for libraries, as dependent crates might still want to use them, but it doesn't make much sense for binaries or examples. Another example is unused trait implementations. Being able to purge unused derives might be useful to speed up compilation times in big projects, but checking whether trait implementations are used or not is not so simple (though it would be a great addition to the extensive list of features above).

On the other hand, we have unused variables, struct fields, and enum variants. These are checked by the compiler, but require more work to remove effectively, and are conceptually illogical to remove in some cases. The warning generated by unused variables is the same as the warning generated by unused function parameters, which cannot be removed when implementing a function from a trait. Moreover, all calls to the function would also need to have that argument removed, which in turn can lead to more unused variables. cargo fix already handles this warning by prefixing the variable with an underscore, so we went ahead and ignored this problem entirely.
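The trait case is easy to reproduce: the trait fixes the signature, so an implementation that ignores a parameter cannot drop it, and the underscore prefix is the only way to silence the warning without touching the trait. The Shape trait and Square type are made up for illustration.

```rust
/// The trait dictates the signature, including the `verbose` parameter.
trait Shape {
    fn describe(&self, verbose: bool) -> String;
}

struct Square {
    side: u32,
}

impl Square {
    fn new(side: u32) -> Self {
        Square { side }
    }
}

impl Shape for Square {
    // This implementation has no use for `verbose`, but removing it would no
    // longer match the trait. The leading underscore (what cargo fix applies)
    // silences the unused-variable warning instead.
    fn describe(&self, _verbose: bool) -> String {
        format!("square with side {}", self.side)
    }
}
```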

Purging struct fields that are written to but never read is also difficult, as we would need to remove all writes as well. Beyond that, structs and enums are created and named to represent some kind of data, and removing part of that data, used or not, can leave a type that no longer truly represents it. For instance, a Rectangle with only a width field can hardly be considered a rectangle. In that sense, the fields are used, just not in a way the compiler can verify. This way of thinking also conveniently saved us from a lot of extra work.

Another, more obscure example of code that is difficult to remove is a macro invoked a couple of times, where each invocation generates two constants: one that is always used, and one that is only used for the first invocation. The compiler will warn that the second constant generated by the second invocation is unused, but it cannot simply be removed: its definition lives inside the macro, and deleting it there would also delete the constant the first invocation still needs.
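A minimal reproduction of that situation, with a hypothetical define_limits macro, could look like this. rustc is expected to flag MAX_GROUPS_DOUBLED as never used, yet there is no standalone item to delete.

```rust
// Each invocation defines a constant plus a derived "doubled" constant.
macro_rules! define_limits {
    ($name:ident, $doubled:ident, $value:expr) => {
        const $name: u32 = $value;
        const $doubled: u32 = $value * 2;
    };
}

define_limits!(MAX_USERS, MAX_USERS_DOUBLED, 10);
define_limits!(MAX_GROUPS, MAX_GROUPS_DOUBLED, 4);

fn capacity() -> u32 {
    // MAX_GROUPS_DOUBLED is never read. Its `const` item is written once,
    // inside the macro body, and that same line also produces
    // MAX_USERS_DOUBLED, so it cannot be deleted on its own.
    MAX_USERS + MAX_USERS_DOUBLED + MAX_GROUPS
}
```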

Considering our use case and the amount of time we had, we decided to support minifying most of the unused-warnings generated by the compiler that are not fixed by cargo fix and not generated by a macro, and to allow removing some instances of empty blocks as well. The compiler doesn't warn us about the latter, so parsing the code ourselves seemed like a fun exercise.
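That filtering decision can be sketched as a small function over three pieces of a diagnostic: the lint name, the message, and whether the warning's span comes from a macro expansion (rustc's JSON output exposes the latter as a non-null expansion field on the span). The message prefixes are assumptions based on the wording of recent compiler versions, which changes over time, and classify and ItemKind are hypothetical names.

```rust
/// Kinds of unused items this sketch treats as removable (hypothetical).
#[derive(Debug, Clone, Copy, PartialEq)]
enum ItemKind {
    Constant,
    Static,
    Function,
    AssociatedFunction,
    Struct,
    Enum,
    Union,
    TypeAlias,
    Macro,
}

/// Decides whether a warning names an item this sketch would remove.
/// `lint` is the lint name (e.g. "dead_code"), `message` the warning text,
/// and `from_macro` whether the primary span comes from a macro expansion.
fn classify(lint: &str, message: &str, from_macro: bool) -> Option<ItemKind> {
    // Macro-generated code has no standalone definition to delete.
    if from_macro {
        return None;
    }
    match lint {
        "unused_macros" => Some(ItemKind::Macro),
        "dead_code" => {
            // Assumed message prefixes; the exact wording differs between rustc versions.
            const PREFIXES: [(&str, ItemKind); 8] = [
                ("constant ", ItemKind::Constant),
                ("static ", ItemKind::Static),
                ("function ", ItemKind::Function),
                ("associated function ", ItemKind::AssociatedFunction),
                ("struct ", ItemKind::Struct),
                ("enum ", ItemKind::Enum),
                ("union ", ItemKind::Union),
                ("type alias ", ItemKind::TypeAlias),
            ];
            // Fields ("field ... is never read") and variants match no prefix,
            // so they are deliberately left alone.
            PREFIXES
                .iter()
                .find(|(prefix, _)| message.starts_with(*prefix))
                .map(|&(_, kind)| kind)
        }
        // unused_variables is left to cargo fix; other lints are out of scope.
        _ => None,
    }
}
```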

To get the compiler output in machine-readable format, we can run cargo build --message-format json. This will return all compiler output, including warnings, the code they apply to, and the suggested way to fix them if available. The suggested fix is used by the cargo fix command, which will replace the spanned code with the suggestion. Ideally, we would simply set the suggested fix to be an empty string, and then have cargo fix remove it for us. However, all of the warnings for unused code only point at the identifier of that code, not the entire struct, enum, function, or whatever. Therefore that approach would only remove the name and leave invalid syntax. So, in the end, we simply keep track of a list of identifiers and what kind of construct they are, and pass that on to the next step to remove it along with empty blocks.
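To see why the suggested-fix route fails, it helps to apply an empty replacement to just the identifier's span. Spans in rustc's JSON output carry byte_start and byte_end offsets into the file; the sketch below hardcodes them instead of parsing JSON, and apply_suggestion, record and Unused are hypothetical helpers.

```rust
/// Replaces `source[start..end]` with `replacement`, which is essentially
/// what applying a suggested fix to a span amounts to.
fn apply_suggestion(source: &str, start: usize, end: usize, replacement: &str) -> String {
    format!("{}{}{}", &source[..start], replacement, &source[end..])
}

/// What the sketch keeps instead: the kind of item and its identifier.
#[derive(Debug, PartialEq)]
struct Unused {
    kind: &'static str,
    ident: String,
}

/// Reads the identifier that the warning's span points at.
fn record(source: &str, start: usize, end: usize, kind: &'static str) -> Unused {
    Unused { kind, ident: source[start..end].to_string() }
}
```

For the source fn helper() -> u32 { 1 }, the span covers only helper (bytes 3 to 9), so the "fix" yields fn () -> u32 { 1 }, which no longer parses, whereas recording ("function", "helper") leaves the actual removal to a later step.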

Parsing the syntax

The initial quick-and-dirty approach here was to find the identifier of the unused constant/function/struct/enum/union/alias/whatever, manually look for the last token of the previous item and the first token of the next, and then remove everything in between. This already worked well for most of our tests. The hardest part was leaving a normal amount of newlines and indentation, so that the code would still pass cargo fmt --check if it did so previously.
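The quick-and-dirty strategy fits in a few lines of std-only Rust. This is a reconstruction for illustration, not the original code, and naive_remove is a hypothetical name: it assumes the item starts right after the last ; or } before the identifier and ends at the first ; outside braces or at the brace that closes its first {. It deliberately ignores comments and strings, which is exactly where it breaks.

```rust
/// Naively removes the item named `ident`. Fragile assumptions: the first
/// textual match of `ident` is the item's name, the item starts right after
/// the last `;` or `}` before it, and it ends at the first `;` outside braces
/// or at the `}` closing its first `{`. Comments and strings are not handled.
fn naive_remove(source: &str, ident: &str) -> Option<String> {
    let at = source.find(ident)?;
    // Start: just past the previous item's final `;` or `}` (or the file start).
    let start = source[..at]
        .rfind(|c: char| c == ';' || c == '}')
        .map_or(0, |i| i + 1);
    // End: scan forward from the identifier, counting braces.
    let mut depth = 0usize;
    let mut end = None;
    for (i, c) in source[at..].char_indices() {
        match c {
            ';' if depth == 0 => {
                end = Some(at + i + 1);
                break;
            }
            '{' => depth += 1,
            '}' => {
                depth = depth.checked_sub(1)?;
                if depth == 0 {
                    end = Some(at + i + 1);
                    break;
                }
            }
            _ => {}
        }
    }
    let end = end?;
    // Drop the gap before the removed item and reuse the gap after it.
    let before = source[..start].trim_end();
    let after = &source[end..];
    Some(if before.is_empty() {
        after.trim_start().to_string()
    } else {
        format!("{}{}", before, after)
    })
}
```

On well-behaved input such as fn used() {}, fn helper() {...}, fn main() {} it removes helper cleanly, but a } hidden in a comment ends the item too early and leaves garbage behind.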

However, our way of finding the last token of the previous item and the first token of the next was not fool-proof. Items usually end with either a ; or a }, so the last token of the previous item is usually the last occurrence of these characters before the unused identifier. Finding the first token of the next item is more difficult, because items can start with const, fn, struct, enum, union, type, #[an_annotation], /// a doc comment, /** a different doc comment */, pub, mod, use, macro_rules!, and probably numerous other things. Moreover, if we want to minify an enum, for instance, we first have to skip everything between the enum's curly braces. And within those curly braces there can be even more curly braces, so simply finding the first } won't work. And did you know that you can place comments between pretty much every single word? Those comments can even be /* nested /* like */ this */. And if there are any curly braces within those comments, they must be properly ignored or you're jumping out of the frying pan and into the fire.
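Handling just the nested-comment part of that list already takes some care. The hypothetical matching_brace function below finds the } that closes a given {, skipping // line comments and nested /* */ block comments. String and character literals (such as '}') are still not handled, which shows how quickly the hand-written approach grows.

```rust
/// Given the byte index of an opening `{`, returns the byte index of the `}`
/// that closes it, ignoring braces inside `//` line comments and nested
/// `/* ... */` block comments. String and char literals are NOT handled.
fn matching_brace(src: &str, open: usize) -> Option<usize> {
    let bytes = src.as_bytes();
    if bytes.get(open).copied() != Some(b'{') {
        return None;
    }
    let mut depth = 0usize;
    let mut i = open;
    while i < bytes.len() {
        match (bytes[i], bytes.get(i + 1).copied()) {
            (b'/', Some(b'/')) => {
                // Line comment: skip to the end of the line.
                while i < bytes.len() && bytes[i] != b'\n' {
                    i += 1;
                }
                continue;
            }
            (b'/', Some(b'*')) => {
                // Block comment: Rust allows nesting, so count openers and closers.
                let mut nesting = 0usize;
                while i < bytes.len() {
                    if bytes[i] == b'/' && bytes.get(i + 1).copied() == Some(b'*') {
                        nesting += 1;
                        i += 2;
                    } else if bytes[i] == b'*' && bytes.get(i + 1).copied() == Some(b'/') {
                        nesting -= 1;
                        i += 2;
                        if nesting == 0 {
                            break;
                        }
                    } else {
                        i += 1;
                    }
                }
                continue;
            }
            (b'{', _) => depth += 1,
            (b'}', _) => {
                depth -= 1;
                if depth == 0 {
                    return Some(i);
                }
            }
            _ => {}
        }
        i += 1;
    }
    None // unbalanced braces or an unterminated comment
}
```

Scanning bytes rather than chars is safe here because every delimiter is ASCII, and ASCII bytes never occur inside multi-byte UTF-8 sequences.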

For a more sophisticated attempt, we parse each file that contains unused structures using the syn crate. Doing so allows us to look through the abstract syntax tree for the identifiers that should be removed, and retrieve the span of the entire item with a single method call. Then we simply snip that part out of the code, use a bunch of arcane tricks to leave an appropriate amount of whitespace in between, and done! A much less fragile approach.
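The whitespace side of the snipping can be illustrated on its own. Assuming the item's start and end lines have already been obtained from syn (proc-macro2 only reports line and column positions outside of procedural macros when its span-locations feature is enabled), a line-based sketch removes the item's lines and then applies a few rules at the seam. The hypothetical snip_lines function and its rules are one guess at such tricks, not the ones cargo-minify uses, and they assume rustfmt-style code where items occupy whole lines.

```rust
/// Removes lines `first..=last` (1-based, inclusive) and tidies up the blank
/// lines left at the seam between the neighbouring items.
fn snip_lines(src: &str, first: usize, last: usize) -> String {
    let mut lines: Vec<&str> = src.lines().collect();
    lines.drain(first - 1..last);
    let j = first - 1; // index of the first line after the removed item
    let is_blank = |l: &str| l.trim().is_empty();
    let prev = j.checked_sub(1).map(|i| lines[i]);
    let next = lines.get(j).copied();
    match (prev, next) {
        // Two blank lines now meet: keep only one.
        (Some(p), Some(n)) if is_blank(p) && is_blank(n) => {
            lines.remove(j);
        }
        // A blank line now sits right before a closing brace or the end of file.
        (Some(p), n) if is_blank(p) && n.map_or(true, |n| n.trim_start().starts_with('}')) => {
            lines.remove(j - 1);
        }
        // A blank line now sits right after an opening brace or the start of file.
        (p, Some(n)) if is_blank(n) && p.map_or(true, |p| p.trim_end().ends_with('{')) => {
            lines.remove(j);
        }
        _ => {}
    }
    let mut out = lines.join("\n");
    // Preserve the original trailing newline, if there was one.
    if src.ends_with('\n') && !out.is_empty() {
        out.push('\n');
    }
    out
}
```

Removing the last function of a mod, for example, also removes the blank line that separated it from its sibling, so the closing brace ends up directly below the remaining item.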

Try it now!

The tool is obviously open source, and the source code can be found here. If you have any feedback or ideas, feel free to create an issue. Mandatory disclaimer: we're not responsible for accidental deletion of entire codebases.