WebVR and Three.js

On each side of the cube we print the name and direction of the axis that the side faces. Let's call this cube the "orientation cube", and let's call the 3D scene "the world", because that is what it is from the user's perspective. Both the orientation cube and the world are added directly to the root scene, which is the scene you create with the code rootScene = new THREE.Scene().

When wearing an Oculus, you are positioned in the middle of this orientation cube and you can look to all sides of the cube by moving your head. You can move around in the world with the arrow keys of your keyboard.

The API

To get the rotation and position data of the Oculus using JavaScript, we first query for VR devices:

if(navigator.getVRDevices){

// getVRDevices returns a promise

navigator.getVRDevices().then(

// the fulfilled callback receives an array containing all detected VR devices

function onFulfilled(devices){

detectedVRDevices = devices;

}

);

}

The detected VR devices can be instances of PositionSensorVRDevice or instances of HMDVRDevice.
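To make the two device types concrete, here is a minimal sketch of how the detected devices could be sorted into a headset and its matching position sensor. The helper name pickDevices is hypothetical, and the sketch assumes that both device types expose a hardwareUnitId property that is identical for devices belonging to the same physical unit. The two constructors are passed in as arguments so the function can also be tried outside a WebVR-enabled browser.

```javascript
// Hypothetical helper (not part of the WebVR API): find the first HMD and
// the position sensor that belongs to the same physical hardware unit.
// Assumption: devices of one unit share the same `hardwareUnitId`.
function pickDevices(devices, HMDClass, SensorClass) {
  const hmd = devices.find((d) => d instanceof HMDClass) || null;
  if (hmd === null) {
    return { hmd: null, sensor: null };
  }
  const sensor =
    devices.find(
      (d) => d instanceof SensorClass && d.hardwareUnitId === hmd.hardwareUnitId
    ) || null;
  return { hmd, sensor };
}

// In a browser that implements this API, the call would look like:
// const { hmd, sensor } = pickDevices(detectedVRDevices, HMDVRDevice, PositionSensorVRDevice);
```

The returned sensor would then play the role of vrInput in the render loop further down.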

PositionSensorVRDevice instances are objects that contain data about rotation, position and velocity of movement of the headset.

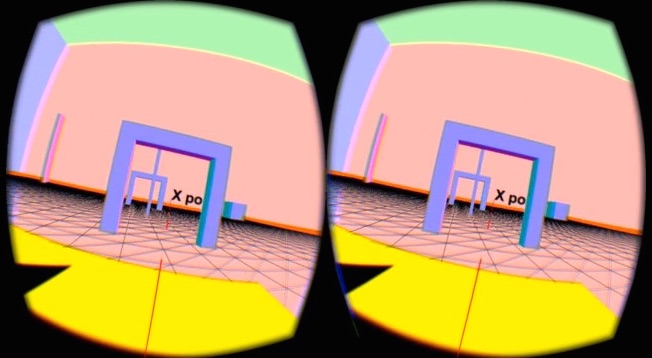

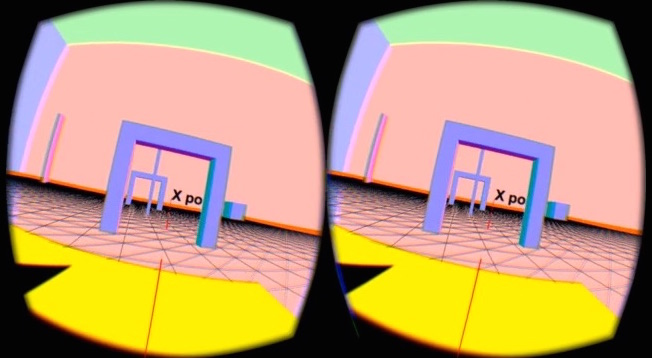

HMDVRDevice instances are objects that contain information such as the distance between the lenses, the distance between the lenses and the displays, the resolution of the displays and so on. This information is needed for the browser to render the scene in stereo with barrel distortion, like so:

To get the rotation and position data from the PositionSensorVRDevice we need to call its getState() method as frequently as we want to update the scene.

function vrRenderLoop(){

let state = vrInput.getState();

let orientation = state.orientation;

let position = state.position;

if(orientation !== null){

// do something with the orientation,

// for instance rotate the camera accordingly

}

if(position !== null){

// do something with the position,

// for instance adjust the distance between the camera and the scene

}

// render the scene

render();

// get the new state as soon as possible

requestAnimationFrame(vrRenderLoop);

}

Putting it together

For our first application we only use the orientation data of the Oculus. We use this data to set the rotation of the camera, which is rather straightforward:

let state = vrInput.getState();

camera.quaternion.copy(state.orientation);
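To illustrate what the orientation data represents, the sketch below rotates the camera's default viewing direction (0, 0, -1) by the orientation quaternion, which gives the direction the user is looking in. The function names are hypothetical; the only assumption about the state object is that its orientation is either null or an object with x, y, z and w components.

```javascript
// Rotate vector v = {x, y, z} by the unit quaternion q = {x, y, z, w}.
function rotateByQuaternion(v, q) {
  // First compute q * v (treating v as a quaternion with w = 0) ...
  const ix = q.w * v.x + q.y * v.z - q.z * v.y;
  const iy = q.w * v.y + q.z * v.x - q.x * v.z;
  const iz = q.w * v.z + q.x * v.y - q.y * v.x;
  const iw = -q.x * v.x - q.y * v.y - q.z * v.z;
  // ... then multiply the result by the inverse (conjugate) of q.
  return {
    x: ix * q.w - iw * q.x - iy * q.z + iz * q.y,
    y: iy * q.w - iw * q.y - iz * q.x + ix * q.z,
    z: iz * q.w - iw * q.z - ix * q.y + iy * q.x,
  };
}

// Hypothetical helper: the viewing direction for a given sensor state,
// or null when the sensor does not report an orientation.
function lookDirection(state) {
  if (state.orientation === null) {
    return null;
  }
  return rotateByQuaternion({ x: 0, y: 0, z: -1 }, state.orientation);
}
```

For a head turned 90 degrees to the left (a rotation around the Y axis), the resulting direction points along the negative X axis.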

Usually, when you want to walk around a 3D world in first person, you move and rotate the camera in the desired direction. In this case that is not possible, because the camera's rotation is controlled by the Oculus. Instead we do the reverse: we keep the camera at a fixed position and move and rotate the world.

To get this to work properly, we add an extra pivot to our root scene and we add the world as a child to the pivot:

camera

root scene

    ↳ orientation cube

    ↳ pivot

        ↳ world

The camera (the user) stays fixed at the same position as the pivot, but it can rotate independently of the pivot. This happens if you rotate your head while wearing the Oculus.

If we want to rotate the world, we rotate the pivot. If we want to move forward in the world, we move the world backwards over the pivot; see this video:
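The pivot approach can be sketched with plain numbers, without Three.js. This is only an illustration under a few assumptions, not the code of the application: the state object and function names are hypothetical, the pivot rotates around the Y axis only, and the camera looks down the negative Z axis of the root scene (head rotation is ignored). In a Three.js application the two values would be assigned to the rotation of the pivot and the position of the world.

```javascript
// Hypothetical state: the pivot's rotation around Y (radians) and the
// position of the world expressed in the pivot's local frame.
function createNavigationState() {
  return { pivotRotationY: 0, worldPosition: { x: 0, y: 0, z: 0 } };
}

// Rotating the pivot turns the whole world around the fixed camera.
function rotatePivot(state, angle) {
  state.pivotRotationY += angle;
}

// Moving forward means sliding the world towards the camera. The camera
// looks down -Z in root space, so the world has to shift along +Z in root
// space; here that shift is converted into the pivot's local frame.
function moveForward(state, distance) {
  const theta = state.pivotRotationY;
  state.worldPosition.x += -distance * Math.sin(theta);
  state.worldPosition.z += distance * Math.cos(theta);
}

// Map the arrow keys (names as in KeyboardEvent.key) to both operations.
// Turning the user to the left means rotating the world to the right.
function handleKey(state, key, step = 1, turn = Math.PI / 36) {
  switch (key) {
    case 'ArrowUp': moveForward(state, step); break;
    case 'ArrowDown': moveForward(state, -step); break;
    case 'ArrowLeft': rotatePivot(state, -turn); break;
    case 'ArrowRight': rotatePivot(state, turn); break;
  }
  return state;
}
```

Because the world's position is stored relative to the pivot, the forward step has to be rotated back by the pivot's angle; otherwise "forward" would drift away from the viewing direction as soon as the pivot has been rotated.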

You can try it yourself with the live version: the up and down arrow keys control the translation of the world, and the left and right arrow keys control the rotation of the pivot. The source code is available on GitHub.

About the camera in Three.js

By default, the camera in Three.js is on the same hierarchical level as the root scene. This is like a cameraman filming a play on a stage while standing in the audience; in theory, both the stage and the cameraman can move independently of each other.

If you add the camera to the root scene then it is like the cameraman stands on the stage while filming the play; if you move the stage, the cameraman will move as well.

You can also add a camera to any 3D object inside the root scene. This is like the cameraman standing on a cart on the stage while filming the play; the cameraman can move independently of the stage, but if the stage moves the cameraman and her cart will move as well.

In our application the camera is fully controlled by the Oculus, so the first scenario is the best option.

This comes in handy, since we have applied rotations to the root scene (see this post). If we added the camera to the scene, the camera would rotate along with it, so the rotations of the scene would have no visible effect. Here is an example of a situation in which the scene rotates while the camera is added to that same scene:

Note that in most Three.js examples you find online it does not make any difference whether or not the camera is added to the root scene, but in our case it is very important.
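Why the parent of the camera matters can be shown with a deliberately simplified stand-in for a scene graph. The sketch below does not use Three.js; the node structure and function names are hypothetical, and only rotations around the Y axis are considered, so that the rotations of parent and child can simply be added up.

```javascript
// Minimal stand-in for a scene graph node: a Y rotation and a parent.
function makeNode(rotationY = 0, parent = null) {
  return { rotationY, parent };
}

// The world rotation of a node is its own rotation plus the rotation
// of all its ancestors (valid here because we only rotate around Y).
function worldRotationY(node) {
  let angle = 0;
  for (let n = node; n !== null; n = n.parent) {
    angle += n.rotationY;
  }
  return angle;
}

// How far an object appears rotated from the camera's point of view.
function apparentRotation(object, camera) {
  return worldRotationY(object) - worldRotationY(camera);
}

const rootScene = makeNode(Math.PI / 4);        // the rotated root scene
const cube = makeNode(0, rootScene);            // an object inside the scene
const freeCamera = makeNode(0, null);           // camera not added to the scene
const attachedCamera = makeNode(0, rootScene);  // camera added to the scene
```

With these nodes, apparentRotation(cube, freeCamera) equals the rotation of the root scene, whereas apparentRotation(cube, attachedCamera) is zero: the attached camera inherits the rotation of the scene, so the rotation cancels out.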

The result

We have made two screencasts of the result from the output rendered to the Oculus: